Direct Numerical Simulations of Flame Propagation in Hydrogen-Oxygen Mixtures in Closed Vessels

Tier 1 Science Project

Science

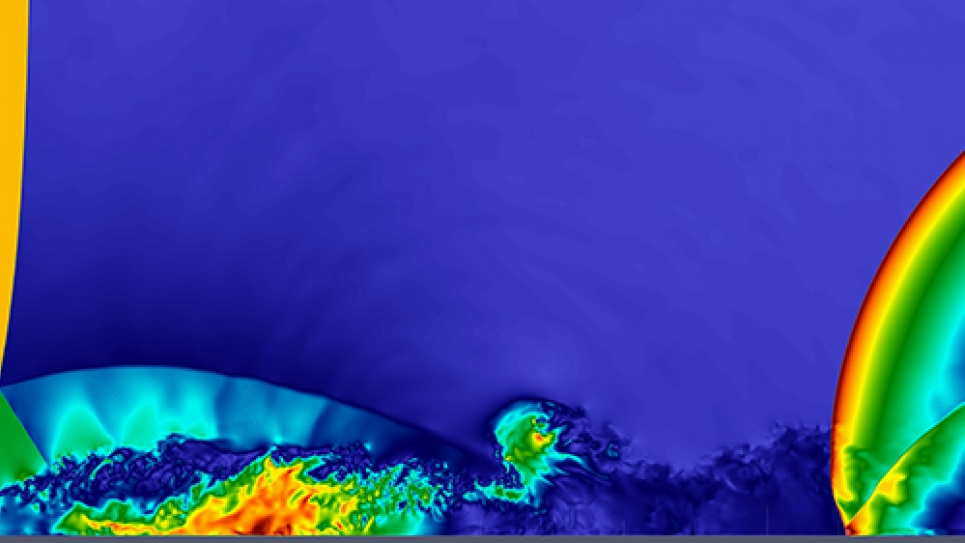

The scientific goal of this project is to perform direct numerical simulations (DNS) of flame propagation experiments in hydrogen-oxygen mixtures in spherical containers. Simulating the entire experimental apparatus will bypass the traditional steps of extracting laminar flame speed values from the experimental data and will eliminate the uncertainty associated with the measured laminar flame velocity. Instead, the simulations will directly validate the existing chemical reaction mechanisms and the thermal and species diffusion models. They will also provide a combined sensitivity analysis of flame acceleration in hydrogen-oxygen mixtures with respect to reaction constants and microscopic transport parameters of the mixture at varying ambient pressure and temperature. This is crucial for quantitative, first-principles prediction of flame acceleration and the deflagration-to-detonation transition (DDT) in hydrogen mixtures.

Impact: First-principles understanding and quantitative prediction of flame acceleration and DDT in hydrogen is important for the industrial and public safety of hydrogen fuels and certain types of water-cooled nuclear reactors.

Problem Scale

To model flame propagation in a spherical container of radius R = 10 cm at a numerical resolution of ∆ = 10 μm (sufficient for simulations at 1 atm initial pressure), a uniform grid would require ~4×10¹² cells. Simulating one octant (1/8) of the sphere with adaptive mesh refinement (AMR) reduces this to ~5×10⁹ cells. The number of time steps is dictated by the stability conditions and the flame velocity; at the proposed resolution, the time step is limited mainly by the hydrodynamic Courant condition.
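The cell counts above can be checked with a few lines of arithmetic. The sketch below counts uniform cells of size ∆ inside a sphere of radius R and inside one octant, and shows how a Courant-limited time step turns into a number of steps. The function names, the default CFL number of 0.5, and any signal speed or end time you pass in are illustrative assumptions, not values from the project.

```python
import math


def uniform_cell_counts(radius_m: float, dx_m: float) -> tuple[float, float]:
    """Cells needed to fill a sphere, and one octant of it, at uniform spacing dx."""
    cells_per_radius = radius_m / dx_m
    full_sphere = (4.0 / 3.0) * math.pi * cells_per_radius**3
    return full_sphere, full_sphere / 8.0


def courant_time_step(dx_m: float, max_speed_m_s: float, cfl: float = 0.5) -> float:
    """Hydrodynamic Courant limit dt <= CFL * dx / max(|u| + c).

    cfl=0.5 is an assumed default; max_speed_m_s is the largest signal speed.
    """
    return cfl * dx_m / max_speed_m_s


def number_of_steps(t_end_s: float, dt_s: float) -> int:
    """Steps needed to reach t_end, e.g. the time for the flame to cross the vessel."""
    return math.ceil(t_end_s / dt_s)


full, octant = uniform_cell_counts(0.10, 10e-6)  # R = 10 cm, dx = 10 um
print(f"full sphere: {full:.2e} cells, one octant: {octant:.2e} cells")
```

The full-sphere count comes out near 4.2×10¹², matching the ~4×10¹² quoted above. Octant symmetry alone gives about 5×10¹¹ cells, so AMR accounts for the remaining factor of roughly 100 needed to reach ~5×10⁹.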

Numerical Methods/Algorithms

High-speed combustion and detonation problems are described by the compressible, reactive-flow Navier-Stokes equations. In addition to describing continuous flow (Euler terms), dissipative transport (Navier-Stokes terms), and chemical kinetics, the HSCD code treats shocks as discontinuities. Euler fluxes in HSCD are calculated using a second-order accurate, Godunov-type, directionally split conservative scheme with a Riemann solver for an arbitrary equation of state and a monotone van Leer reconstruction of the left and right states. Viscous, mass-diffusion, and heat fluxes are calculated using second-order central differencing and added to the Euler fluxes. The present version of HSCD is augmented with the physics required for H2 + O2 combustion.
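The two flux ingredients described above can be illustrated in one dimension, i.e. a single sweep of a directionally split scheme. This is a minimal NumPy sketch assuming one scalar variable on a uniform grid: it computes van Leer limited slopes, extrapolates left and right states to each face, and evaluates a Fourier-type diffusive flux by central differencing. The Riemann solver is omitted, and the function names and the choice of reconstructed variable are hypothetical, not HSCD's.

```python
import numpy as np


def van_leer_slopes(q):
    """Monotone van Leer (harmonic-mean) limited slopes for interior cells 1..n-2."""
    q = np.asarray(q, dtype=float)
    dq_minus = q[1:-1] - q[:-2]  # backward differences
    dq_plus = q[2:] - q[1:-1]    # forward differences
    prod = dq_minus * dq_plus
    slopes = np.zeros_like(dq_minus)
    smooth = prod > 0.0          # zero slope at local extrema keeps the scheme monotone
    slopes[smooth] = 2.0 * prod[smooth] / (dq_minus[smooth] + dq_plus[smooth])
    return slopes


def interface_states(q):
    """Left/right states at each face between neighbouring interior cells."""
    q = np.asarray(q, dtype=float)
    s = van_leer_slopes(q)
    qc = q[1:-1]
    q_left = qc[:-1] + 0.5 * s[:-1]  # extrapolated from the cell left of the face
    q_right = qc[1:] - 0.5 * s[1:]   # extrapolated from the cell right of the face
    return q_left, q_right


def diffusive_face_flux(T, k, dx):
    """Second-order central difference for the flux -k dT/dx at faces i+1/2."""
    T = np.asarray(T, dtype=float)
    return -k * np.diff(T) / dx


# In a full scheme, (q_left, q_right) would be passed to a Riemann solver to get
# the Euler flux at each face, and the diffusive flux at the same faces added:
#   total_flux = euler_flux + diffusive_flux
```

Because the limited slope is set to zero wherever the backward and forward differences change sign, the reconstructed face values never overshoot the neighbouring cell averages, which is what makes the reconstruction monotone.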

A distinctive feature of HSCD is its dynamic AMR, based on a parallel fully threaded tree (FTT) structure. Mesh refinement is performed on a cell-by-cell basis; that is, each computational cell is refined and unrefined individually.
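Cell-by-cell refinement can be pictured as a tree in which any single leaf cell may be split into 2^d children, or have its children merged back, independently of every other cell. The sketch below shows only that idea. It assumes a simple parent/children tree with a hypothetical `Cell` class. It omits the neighbour threading that gives the FTT its name and its fast neighbour access, along with any refinement criteria or level-jump constraints.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Iterator, Optional


@dataclass(eq=False)
class Cell:
    """One computational cell; hypothetical structure, not HSCD's FTT layout."""
    level: int
    center: tuple[float, ...]
    size: float
    parent: Optional[Cell] = field(default=None, repr=False)
    children: list[Cell] = field(default_factory=list, repr=False)

    def is_leaf(self) -> bool:
        return not self.children

    def refine(self) -> None:
        """Split this single cell into 2**d children of half the size."""
        if not self.is_leaf():
            return
        d = len(self.center)
        h = self.size / 4.0  # offset from the parent centre to a child centre
        for k in range(2**d):
            # bit j of k selects the lower (-) or upper (+) half in direction j
            offset = tuple(h if (k >> j) & 1 else -h for j in range(d))
            child_center = tuple(c + o for c, o in zip(self.center, offset))
            self.children.append(
                Cell(self.level + 1, child_center, self.size / 2.0, parent=self))

    def unrefine(self) -> bool:
        """Merge the children back into this cell, only if they are all leaves."""
        if self.is_leaf() or any(not c.is_leaf() for c in self.children):
            return False
        self.children.clear()
        return True


def leaves(cell: Cell) -> Iterator[Cell]:
    """Yield the leaf cells, i.e. the cells that actually carry the solution."""
    if cell.is_leaf():
        yield cell
    else:
        for child in cell.children:
            yield from leaves(child)


root = Cell(level=0, center=(0.5, 0.5, 0.5), size=1.0)
root.refine()              # 8 leaves
root.children[0].refine()  # one child refined on its own: 7 + 8 = 15 leaves
print(sum(1 for _ in leaves(root)))  # → 15
```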

Parallelization

The FTT allows all numerical operations, including the physics algorithms and the mesh refinement, to be performed in parallel. Mesh refinement is performed every fourth time step, after which the mesh is rebalanced across the MPI ranks according to the computational work required by the cells. Data locality is maintained using a space-filling curve.
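Work-based rebalancing along a space-filling curve can be sketched as follows: order cells along the curve, then cut the ordered list into contiguous pieces of roughly equal total work, one per rank. The source does not say which curve HSCD uses. This sketch assumes a Morton (Z-order) curve over integer cell coordinates at a single level, and treats per-cell work weights as given inputs. The function names are hypothetical.

```python
def morton_key_3d(i: int, j: int, k: int, bits: int = 10) -> int:
    """Interleave the bits of integer cell coordinates (i, j, k) into a Z-order key."""
    key = 0
    for b in range(bits):
        key |= ((i >> b) & 1) << (3 * b)
        key |= ((j >> b) & 1) << (3 * b + 1)
        key |= ((k >> b) & 1) << (3 * b + 2)
    return key


def partition_by_work(cells, work, n_ranks):
    """Assign each cell to a rank so that ranks own contiguous curve segments of ~equal work.

    cells: list of (i, j, k) integer coordinates
    work:  per-cell cost estimates (must sum to > 0)
    """
    order = sorted(range(len(cells)), key=lambda c: morton_key_3d(*cells[c]))
    target = sum(work) / n_ranks  # ideal work per rank
    owner = [0] * len(cells)
    accumulated = 0.0
    for idx in order:
        # place each cell by the position of its work midpoint along the curve
        rank = min(int((accumulated + 0.5 * work[idx]) / target), n_ranks - 1)
        owner[idx] = rank
        accumulated += work[idx]
    return owner


cells = [(i, j, k) for i in range(2) for j in range(2) for k in range(2)]
print(partition_by_work(cells, [1.0] * len(cells), n_ranks=4))
```

Since each rank receives a contiguous stretch of the curve, cells that are close in space tend to end up on the same rank, which is the locality property the paragraph above refers to. A Hilbert curve is a common alternative with somewhat better locality.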

HSCD uses a hybrid OpenMP/MPI programming model on Mira. Optimal performance and strong scaling of the numerical algorithms are achieved on Mira with four MPI ranks per node and 16 OpenMP threads per rank. Strong scaling of the entire code has been demonstrated on 32 racks (two-thirds of the machine).

Application Development

- The major development effort will involve converting the numerical algorithms from a loop-parallel to a task-parallel execution model within each MPI rank. The researchers plan to use OpenMP to express the task parallelism while working on Mira; the task-parallel code developed on Mira will then be ported to Theta.

- In HSCD, the calculations in each cell can be performed independently of one another and in arbitrary order. The current OpenMP loop parallelism nevertheless introduces additional synchronization points (such as the implicit barrier at the end of each parallel loop). Task parallelism should eliminate these bottlenecks.

- On Theta, use MCDRAM for task memory and mesh connectivity information, so that running tasks operate on data in MCDRAM, while data not associated with currently running tasks can reside in DRAM if needed.

- Optimize or modify the I/O algorithms to perform well on the Lustre file system.

- Implement the initial and boundary condition setup for a closed spherical vessel.
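The difference between loop and task parallelism noted in the list above comes down to how work is released. A loop-parallel code waits at a barrier until every cell has finished one phase. A task-parallel code starts each piece of the next phase as soon as the specific data it needs is ready. The sketch below illustrates only that scheduling pattern, using a Python thread pool as a stand-in for OpenMP tasks, where the `depend` clause of the `task` construct expresses similar dependencies. The block decomposition, the "flux"/"update" phase names, and the three-block stencil are hypothetical. Because of Python's global interpreter lock, the sketch demonstrates ordering, not speedup.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait


def run_task_graph(tasks, deps, max_workers=4):
    """Run each task as soon as all of its dependencies have finished (no global barrier).

    tasks: dict name -> zero-argument callable
    deps:  dict name -> collection of names that must finish first
    Returns task names in completion order.
    """
    remaining = {name: set(deps.get(name, ())) for name in tasks}
    running = {}
    done_order = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while remaining or running:
            # launch every task whose dependencies are all satisfied
            for name in [n for n, d in remaining.items() if not d]:
                del remaining[name]
                running[pool.submit(tasks[name])] = name
            if not running:
                raise ValueError(f"unsatisfiable or cyclic dependencies: {list(remaining)}")
            finished, _ = wait(running, return_when=FIRST_COMPLETED)
            for fut in finished:
                name = running.pop(fut)
                fut.result()  # re-raise any exception from the task
                done_order.append(name)
                for pending in remaining.values():
                    pending.discard(name)
    return done_order


# Hypothetical example: an update of block b needs the fluxes of blocks b-1, b, b+1,
# but not the fluxes of every block in the domain.
n_blocks = 4
log = []
tasks, deps = {}, {}
for b in range(n_blocks):
    tasks[("flux", b)] = lambda b=b: log.append(("flux", b))
for b in range(n_blocks):
    tasks[("update", b)] = lambda b=b: log.append(("update", b))
    deps[("update", b)] = {("flux", n) for n in (b - 1, b, b + 1) if 0 <= n < n_blocks}

order = run_task_graph(tasks, deps)
print(order)
```

With this structure, the update of block 0 can start while fluxes for distant blocks are still being computed, which is the synchronization overhead that the planned task-parallel model aims to remove.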

Portability

There are no plans to port HSCD to CPU-GPU architectures during the Theta ESP period. However, thought will be given to formulating HSCD so that some of its computational tasks could potentially run on GPUs. The new HSCD will retain its portability to multi-core systems, clusters, and laptops through its existing OpenMP/MPI model.