Non-Intrusive Machine Learning Models for Fluid Simulations

Abstract:

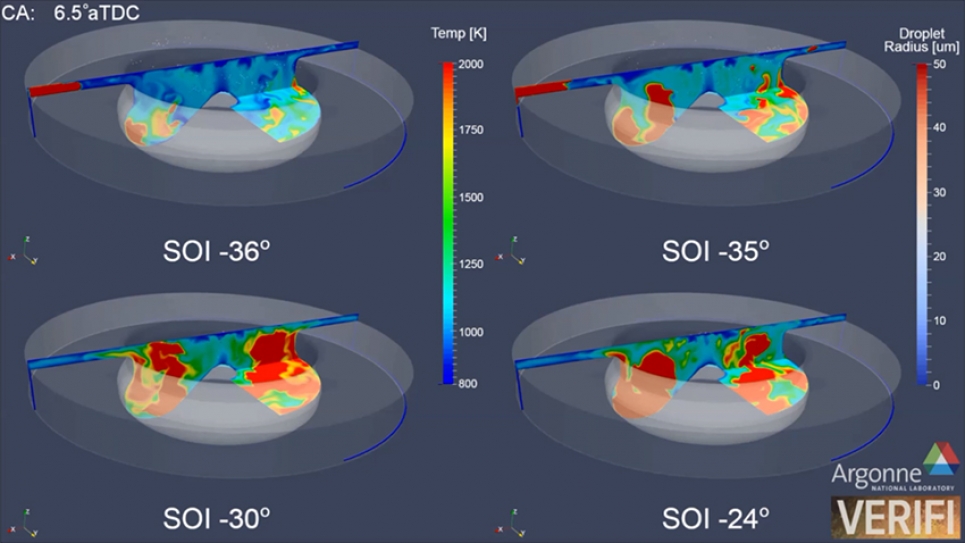

Computer simulations of fluids involve solving non-linear Partial Differential Equations (PDEs) using state-of-the-art numerical methods in scientific computing, often requiring large High-Performance Computing (HPC) resources. However, highly resolved fluid simulations are often computationally intractable because of the non-linear, non-local nature of fluid turbulence. Given the nature of the data (for example, multivariate time series) and the shared foundations in mathematics and statistics, Machine Learning (ML) can often play an important role in advancing the state of the art. In this talk, I will explore a few different approaches to using ML effectively to develop emulator models for complex physical processes such as fluid turbulence, with applications ranging from geophysical turbulence to the modeling of advanced propulsion systems. In addition, I will discuss the interpretability of these so-called 'data-driven' models and explore the effectiveness of strategies such as mixed-precision learning, pruning, and quantization in reducing their inference cost.

Please use this link to attend the virtual seminar: