What drove us to AI?

Whether or not they concur on a definition, most researchers will agree that the impetus for the escalation of AI in scientific research was the influx of massive data sets and the computing power to sift, sort and analyze them.

Not only was the push coming from big corporations brimming with user data, but the tools that drive science were also growing more expansive: bigger and better telescopes and accelerators and, of course, supercomputers, on which researchers could run larger, multiscale simulations.

“The size of the simulations we are running is so big, the problems that we are trying to solve are getting bigger, so that these AI methods can no longer be seen as a luxury, but as must-have technology,” notes Prasanna Balaprakash, a computer scientist in MCS and ALCF.

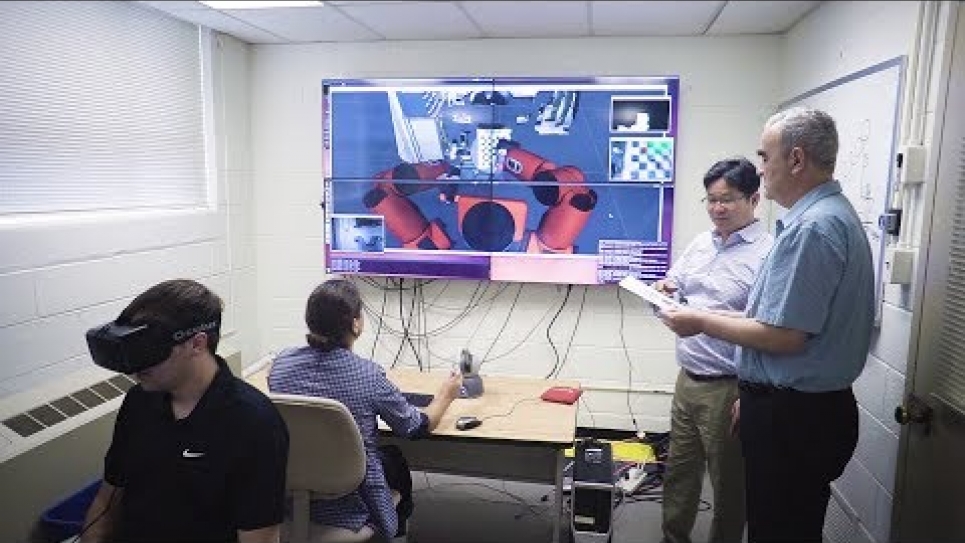

Data and compute size also drove the convergence of more traditional techniques, such as simulation and data analysis, with machine and deep learning. Whereas analysis of simulation-generated data once led, eventually, to changes in an underlying model, that data is now fed back into machine learning models and used to guide more precise simulations.

“More or less anybody who is doing large-scale computation is adopting an approach that puts machine learning in the middle of this complex computing process and AI will continue to integrate with simulation in new ways,” says Stevens.

“And where the majority of users are in theory-modeling-simulation, they will be integrated with experimentalists on data-intense efforts. So the population of people who will be part of this initiative will be more diverse.”

But while AI is leading to faster time-to-solution and more precise results, the number of data points, parameters and iterations required to get to those results can still prove monumental.

Focused on the automated design and development of scalable algorithms, Balaprakash and his Argonne colleagues are developing new types of AI algorithms and methods to more efficiently solve large-scale problems that deal with different ranges of data. These additions are intended to make existing systems scale better on supercomputers, like those housed at the ALCF, a necessity in light of exascale computing.

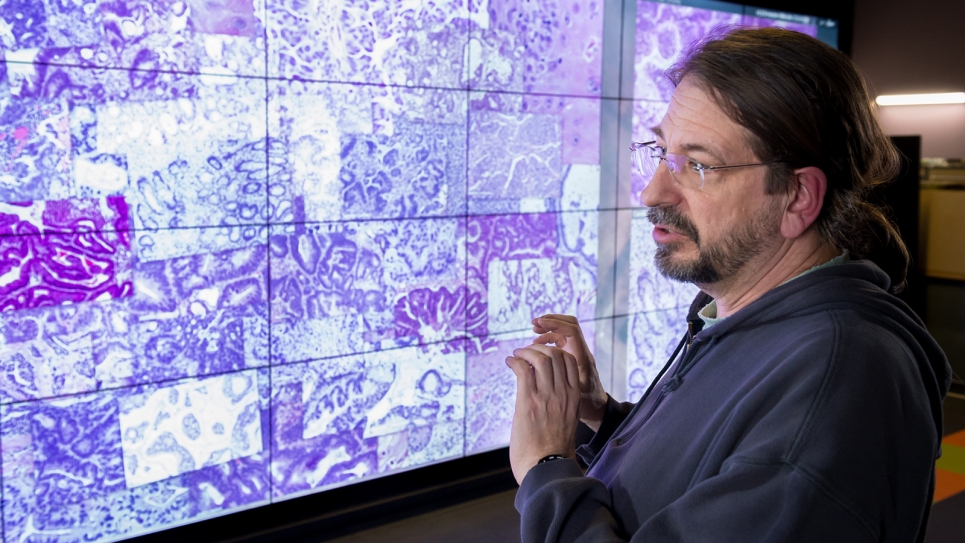

“We are developing an automated machine learning system for a wide range of scientific applications, from analyzing cancer drug data to climate modeling,” says Balaprakash. “One way to speed up a simulation is to replace the computationally expensive part with an AI-based predictive model that can make the simulation faster.”
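The idea in that last remark can be illustrated with a minimal sketch. Everything here is an assumption made for illustration, not Argonne's system: `expensive_kernel` is a hypothetical stand-in for a costly simulation step, and a simple polynomial regression (NumPy's `Polynomial.fit`) plays the role that a trained neural network or other learned model would play in practice.

```python
import math

import numpy as np
from numpy.polynomial import Polynomial


def expensive_kernel(x, steps=2000):
    """Hypothetical costly step: midpoint-rule integral of exp(-t^2) on [0, x]."""
    h = x / steps
    return h * sum(math.exp(-((i + 0.5) * h) ** 2) for i in range(steps))


def fit_surrogate(lo=0.0, hi=2.0, samples=60, degree=12):
    """Run the expensive kernel a limited number of times, then fit a cheap model.

    A polynomial regression stands in here for the AI-based predictive model.
    """
    xs = np.linspace(lo, hi, samples)
    ys = np.array([expensive_kernel(float(x)) for x in xs])
    return Polynomial.fit(xs, ys, degree)


# Train once up front; later calls cost one polynomial evaluation each.
surrogate = fit_surrogate()
```

Inside a simulation loop, `surrogate(x)` would then be called in place of `expensive_kernel(x)` for inputs within the sampled range. Outside that range the fit should not be trusted, which is one reason real surrogate models need careful validation.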

Industry support

The AI techniques that are expected to drive discovery are only as good as the tech that drives them, making collaboration between industry and the national labs essential.

“Industry is investing a tremendous amount in building up AI tools,” says Taylor. “Their efforts shouldn’t be duplicated, but they should be leveraged. Also, industry comes in with a different perspective, so by working together, the solutions become more robust.”

Argonne has long had relationships with computing manufacturers to deliver a succession of ever-more powerful machines to handle the exponential growth in data size and simulation scale. Its most recent partnership, with semiconductor chip manufacturer Intel and supercomputer manufacturer Cray, is to develop the exascale machine Aurora.

But the Laboratory is also collaborating with a host of other industrial partners in the development or provision of everything from chip design to deep learning-enabled video cameras.

One of these, Cerebras, is working with Argonne to test a first-of-its-kind AI accelerator that provides a 100–500 times improvement over existing AI accelerators. As its first U.S. customer, Argonne will deploy the Cerebras CS-1 to enhance scientific AI models for cancer, cosmology, brain imaging and materials science, among others.

The National Science Foundation-funded Array of Things, a partnership between Argonne, the University of Chicago and the City of Chicago, actively seeks commercial vendors to supply technologies for its edge computing network of programmable, multi-sensor devices.

But Argonne and the other national labs are not the only ones to benefit from these collaborations. Companies understand the value in working with such organizations, recognizing that the AI tools developed by the labs, combined with the kinds of large-scale problems they seek to solve, offer industry unique benefits in terms of business transformation and economic growth, explains Balaprakash.

“Companies are interested in working with us because of the type of scientific applications that we have for machine learning,” he adds. “What we have is so diverse, it makes them think a lot harder about how to architect a chip or design software for these types of workloads and science applications. It’s a win-win for both of us.”