Scalable System Software for Parallel Programming

As hardware complexity in leadership-class systems grows, it becomes increasingly difficult for applications to take full advantage of available system resources and avoid potential bottlenecks. This project aims to improve the performance and productivity of key system software components on leadership-class platforms by studying four classes of system software: message passing libraries, parallel I/O, data analysis and visualization, and operating systems.

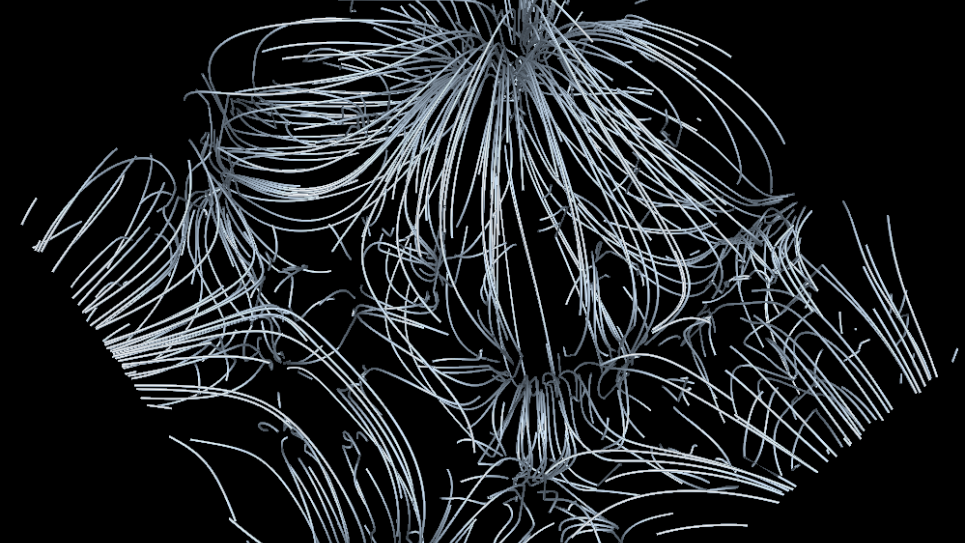

While this work has focused on applications that run on current petascale systems, initial evaluations of future exascale designs have begun, using million-node simulations of network topologies. The massively parallel ROSS discrete-event simulator scales to significant fractions of Mira, the ALCF’s IBM Blue Gene/Q supercomputer, and allows future designs to be evaluated on current hardware.

The project’s Parallel Models and Runtime Systems (PMRS) group is developing a large-scale, resilient data store that tolerates silent memory corruption. It does so through a versioned data repository that protects an application’s important memory regions and can roll them back when necessary. PMRS also works on various runtimes that require large-scale testing to demonstrate their feasibility for production environments, and provides the basis upon which most production MPI libraries are developed. In addition, the group is extending its research on the proprietary 5D torus topology of the Blue Gene/Q system.
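The idea of a versioned repository that protects memory regions and rolls them back can be illustrated with a minimal, single-process sketch. This is not the PMRS implementation. The class `VersionedStore`, its methods, and the use of CRC32 checksums to detect corruption are all assumptions made for illustration, and the check is only meaningful for regions the application has not intentionally modified since the last commit.

```python
import zlib


class VersionedStore:
    """Toy versioned store (hypothetical): checksummed snapshots of named regions."""

    def __init__(self):
        # Each version maps a region name to (snapshot bytes, CRC32 of the snapshot).
        self._versions = []

    def commit(self, regions):
        """Snapshot every {name: bytearray} region and return the new version id."""
        snapshot = {
            name: (bytes(buf), zlib.crc32(buf)) for name, buf in regions.items()
        }
        self._versions.append(snapshot)
        return len(self._versions) - 1

    def find_corrupted(self, regions, version):
        """Return names of regions whose contents no longer match the snapshot checksum."""
        snapshot = self._versions[version]
        return [
            name
            for name, buf in regions.items()
            if zlib.crc32(buf) != snapshot[name][1]
        ]

    def rollback(self, regions, version):
        """Restore every protected region in place from the given version."""
        for name, (data, _checksum) in self._versions[version].items():
            regions[name][:] = data


# Usage: protect one region, simulate a silent bit flip, detect it, and roll back.
regions = {"state": bytearray(b"\x01\x02\x03\x04")}
store = VersionedStore()
v0 = store.commit(regions)
regions["state"][2] ^= 0x10  # simulated memory corruption
damaged = store.find_corrupted(regions, v0)
store.rollback(regions, v0)
```

A production data store would also need to distribute versions across nodes and bound the memory spent on snapshots. The sketch shows only the commit, detect, and rollback cycle.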

The techniques, algorithms, and performance-measuring methodologies used and developed at scale in this research can also be applied to smaller platforms. As a result, the improvements achieved can be deployed immediately throughout the high-performance computing community, improving the nation’s capability for groundbreaking computational science and engineering.