Scalable System Software for Parallel Programming

The Scalable Systems Software for Parallel Programs (SSSPP) project aims to improve the software environment for leadership-class applications in three areas: programming models, high-performance I/O, and analysis. In each area, researchers are exploring ways to make the existing software environment more productive for computational scientists.

For example, researchers deployed Darshan, a lightweight I/O characterization tool, on the IBM Blue Gene/Q systems Vesta and Mira to collect I/O statistics across significant fractions of the machines. The resulting logs will help generate and validate models of typical leadership-class I/O workloads.

While current work has focused on applications for petascale systems, initial evaluations of future exascale designs have begun by running million-node simulations of network topologies. The massively parallel ROSS event simulator scales to significant fractions of Mira, which allows future designs to be evaluated on current hardware. Exascale designs will require fault tolerance, resilience, and power-aware approaches in system software. This research will explore that design space with projects such as a fault-tolerant Message Passing Interface (MPIXFT), multi-level checkpointing (FTI), and power-aware monitoring (MonEQ).
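To make the idea of simulating a network topology with discrete events concrete, here is a minimal sketch. It is a sequential toy model of a unidirectional ring, not ROSS itself, which is a parallel simulator. The function name `simulate_ring`, the ring topology, and the fixed per-hop latency are all assumptions chosen for illustration.

```python
import heapq


def simulate_ring(num_nodes, hop_latency, messages):
    """Toy sequential discrete-event simulation of a unidirectional ring.

    `messages` is a list of (source, destination) node pairs, all injected
    at time 0. Returns a dict mapping message index to arrival time.
    Hypothetical model: every hop costs `hop_latency`, with no contention.
    """
    # Each event is (timestamp, message id, node currently holding the message).
    events = [(0.0, msg_id, src) for msg_id, (src, _dst) in enumerate(messages)]
    heapq.heapify(events)

    arrivals = {}
    while events:
        # Always process the event with the smallest timestamp next.
        time, msg_id, node = heapq.heappop(events)
        if node == messages[msg_id][1]:
            arrivals[msg_id] = time
            continue
        # Forward the message to the next node on the ring.
        next_node = (node + 1) % num_nodes
        heapq.heappush(events, (time + hop_latency, msg_id, next_node))
    return arrivals


if __name__ == "__main__":
    print(simulate_ring(8, 1.5, [(6, 1), (0, 0), (2, 3)]))
```

The priority queue keeps events in timestamp order, which is the core of any discrete-event simulator. A parallel simulator must additionally keep that ordering consistent across many processes, which this sketch does not attempt.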

Working with IBM, SSSPP collaborators identified areas for improvement in the MPI library installed on Blue Gene/Q computers. The resulting "community edition" of the MPI library, while not officially supported by IBM, will provide MPI-3 features, improved I/O performance, and better scalability for several important MPI routines.

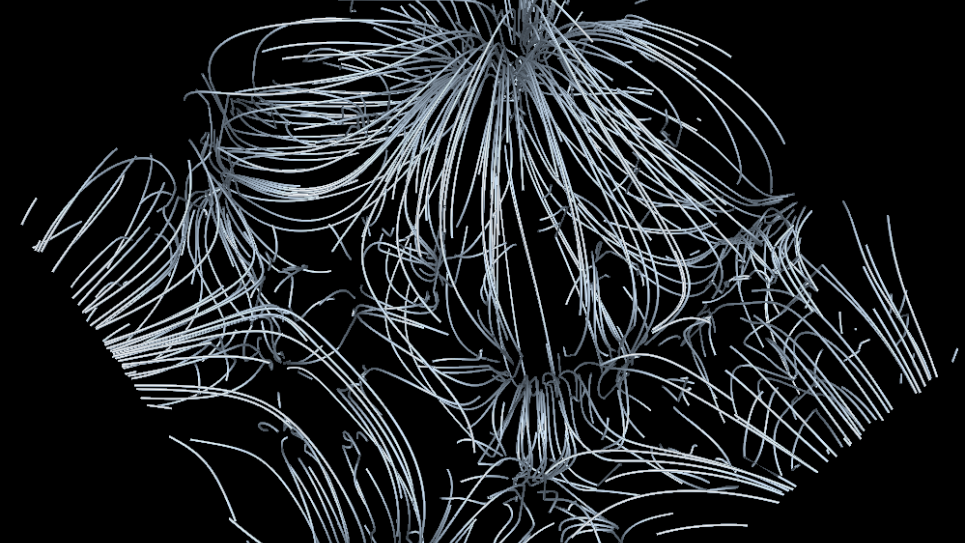

Analysis and visualization efforts led to a new parallel algorithm for computing Delaunay and Voronoi tessellations, which convert discrete point data into a continuous field. Having demonstrated both correctness and scalability, the approach should let scientists reach answers sooner and extract more science from the same computing resources.
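As a small illustration of how a Voronoi tessellation turns discrete points into a field defined everywhere, the sketch below assigns any query location the value of its nearest input point, which is the point whose Voronoi cell contains the query. This is a brute-force, serial, piecewise-constant example and not the project's parallel algorithm. The function names `nearest_site` and `sample_field` are hypothetical.

```python
import math


def nearest_site(sites, query):
    """Return the index of the site whose Voronoi cell contains `query`.

    By definition, a point lies in the Voronoi cell of its nearest site.
    Brute force: checks every site, so the cost is linear in len(sites).
    """
    return min(range(len(sites)), key=lambda i: math.dist(sites[i], query))


def sample_field(sites, values, query):
    """Evaluate a piecewise-constant field: each cell takes its site's value."""
    return values[nearest_site(sites, query)]


if __name__ == "__main__":
    sites = [(0.0, 0.0), (4.0, 0.0), (0.0, 4.0)]
    values = [1.0, 2.0, 3.0]
    print(sample_field(sites, values, (3.0, 1.0)))
    print(sample_field(sites, values, (0.5, 0.5)))
```

An explicit tessellation goes further than this lookup: it builds the cell geometry itself, so that quantities such as cell volume and neighbor relationships become available for analysis.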