Extreme CFD: Fluid Dynamics Projects at ALCF

Kenneth Jansen is a professor at the University of Colorado Boulder who specializes in computational fluid dynamics (CFD). His current work focuses on applying advanced CFD to large-scale simulations.

In addition to his work at the university, Jansen is the principal investigator (PI) of a team working under the auspices of the Argonne Leadership Computing Facility's Theta Early Science Program (Theta ESP). He and his colleagues are tackling some of the most complex physics imaginable. They are homing in on the science of turbulent flow over aircraft control surfaces, such as those on the tail assembly, including the rudder.

According to Jansen, one of the team's goals is to determine how well suited the architectures of the ALCF's recently acquired Theta supercomputer and its successor, Aurora, are for advanced fluid dynamics simulations. Specifically, the team is interested in turbulent flows. Their work covers two applications: aerodynamic flow control and multiphase flow.

Aerodynamic Flow Control

For most airline pilots, hurtling through the air at close to the speed of sound in a large metal tube weighing anywhere from 75 to 450 tons is usually a matter of routine. Occasionally the weather, mechanical problems, and other factors will intervene. Obviously, pilots want as much control of their airplane as possible no matter what the circumstances.

The tail and wings of an airliner are fitted with control surfaces designed to handle the range of conditions the airplane may encounter in flight. Traditionally, these controls have consisted of mechanically actuated flaps and rudders. In some designs the control surfaces have to be oversized to handle more extreme events: for example, an engine failure on one side that forces the pilot to use the rudder to fly the plane in a sideslip, or "catty corner" mode.

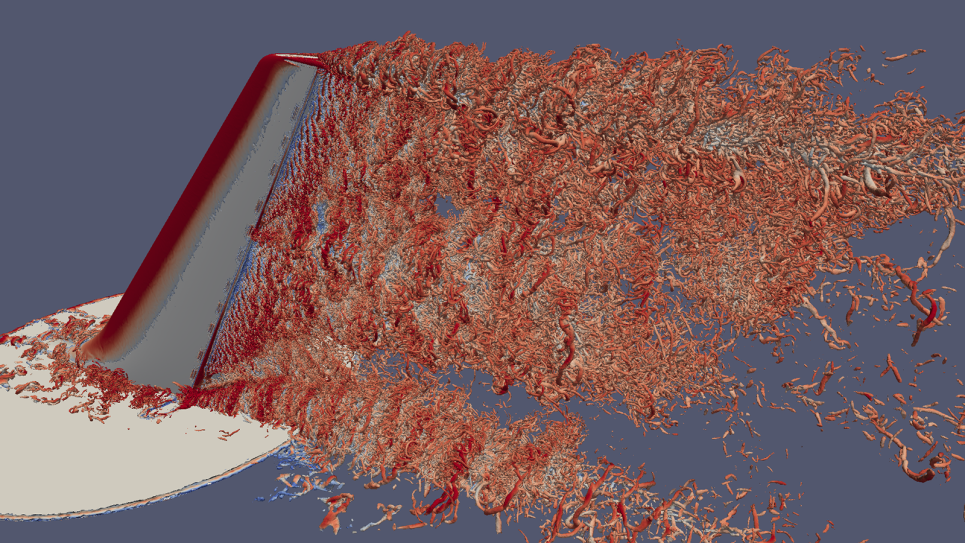

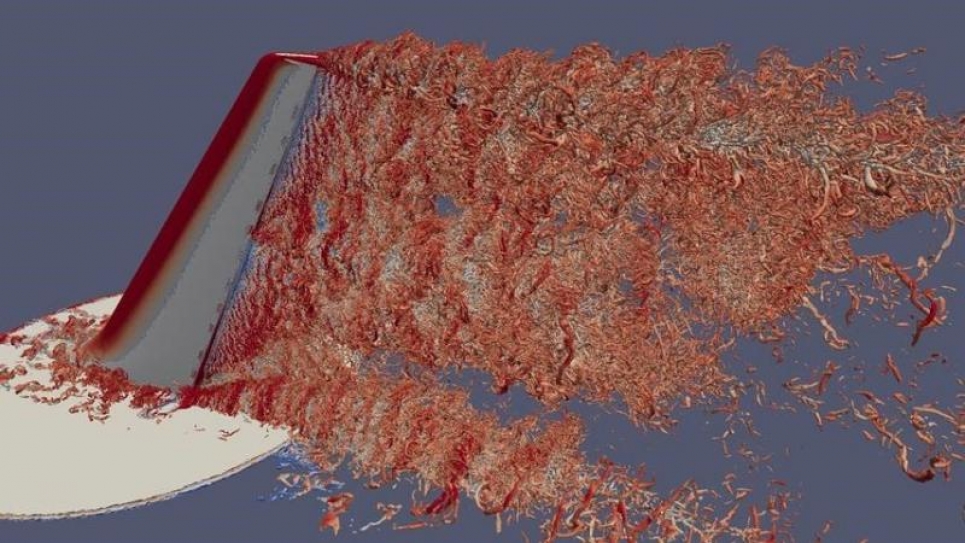

This is heavy-duty work for a typical rudder, which usually has to deal only with minor course corrections or a benign crosswind. One approach Jansen and his colleagues are exploring is to boost the rudder's capabilities by installing tiny jets along the rudder's hinge line to modify the airflow and increase the rudder's effectiveness. To do this, the team needs to accurately model the turbulence over and around the tail rudder assembly, which is a major computational challenge.

The jets have a complex geometry, and classic CFD approaches are not very effective for this application: in some regions around the rudder the mesh has to be extremely fine to capture very small-scale flow features, and doing so efficiently is difficult.

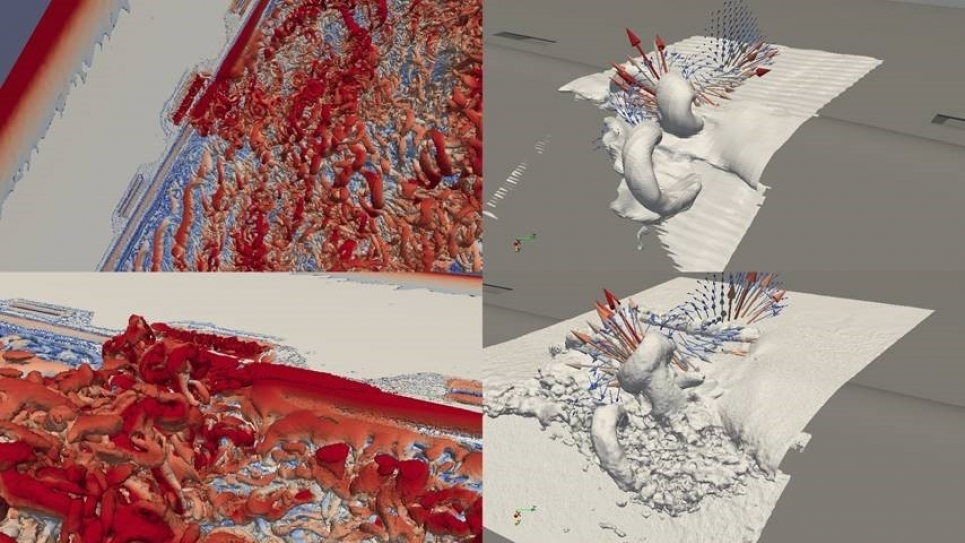

Simulations already run on Theta use 5 billion tetrahedral elements to model the region around the tail rudder assembly out to the wind tunnel walls, so that the simulation results can be checked against the experimental data.

The simulations the research team is running on Theta are a stepping-stone to far more complex flight scale simulations on Aurora. The next-generation supercomputer, based on the Intel Xeon Phi, will be powerful enough to let the team move up from lab scale, which is 1/19th geometric scale, to full flight scale. This will be the first time that components like these have been simulated at flight scale with this level of fidelity. The work on Theta also lets the researchers adapt their algorithms to take advantage of the capabilities of both Theta and the upcoming Aurora.

This is in line with what Theta is all about: preparing the user community to run their simulations on Aurora. Jansen says they did run the simulations on Mira (the earlier Blue Gene/Q system), but it became clear that flight scale was out of reach on that machine. The same was true of Theta, a 9.65-petaflops machine.

To run flight scale simulations, the researchers need far more than the factor of 5 improvement over Mira that Theta provides. The jump in capability when Aurora comes online is expected to supply the computing power needed for flight scale simulations, with room to spare.

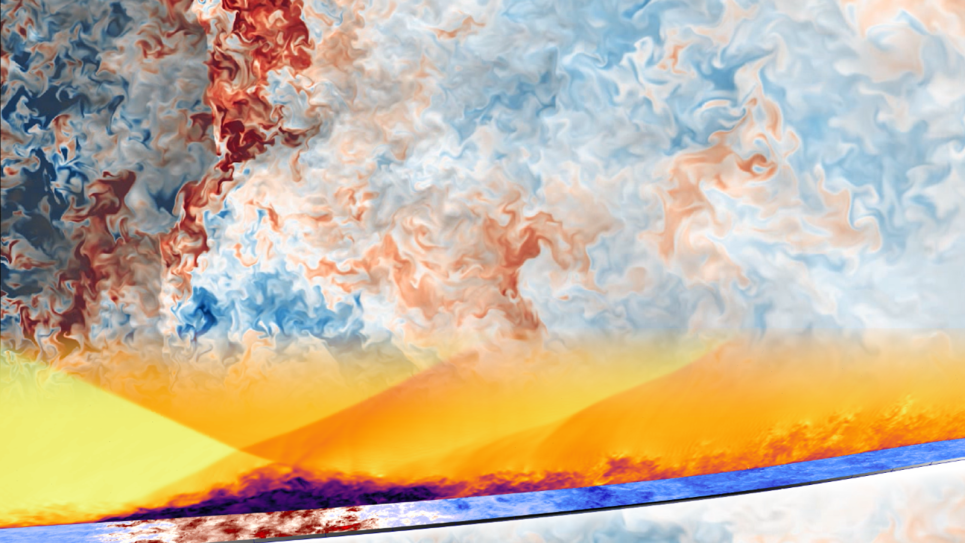

Extreme Multiphase Flow

Another simulation the Theta ESP project team has underway on Theta also concerns turbulent flow, but in this case the complexity is compounded by the presence of both liquid and gas, in the form of vapor bubbles. Some of the major computational challenges stem from the differences in fluid density, with ratios between the phases ranging from 100 to 1000. The primary task is solving the physics of the interactions and resolving the boundary between the two phases as it evolves throughout the simulation.

The team is using a mature finite-element flow solver, PHASTA, paired with anisotropic adaptive meshing procedures. PHASTA is a parallel, hierarchic (2nd to 5th order accurate), adaptive, stabilized (finite-element) transient analysis solver for compressible or incompressible flows. PHASTA is also used for the aerodynamics calculations, with the same code base and the same basic method (finite elements on adaptive meshes).

PHASTA has multiple flow solver methods and modules to handle very different types of flows. At a Theta ESP hands-on workshop, the team worked with Intel to tune PHASTA.

In Situ Analytics

Computational power has grown with amazing rapidity, but file systems and the ability to write out data have not kept pace. To work around this, the team is researching in situ analytics: while solving the flow equations, they analyze the results at the same time, using other hardware threads to perform the data analytics. They are also extracting data for visualization. What formerly would have been handled in post-processing is now done live, in situ, as part of the ongoing simulation.
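The in situ pattern described above can be illustrated with a small producer/consumer sketch. This is not PHASTA's implementation; the toy `solver` and `analyzer` functions, the queue hand-off, and the "field" being updated are all hypothetical stand-ins, chosen only to show analysis running alongside the solve rather than after it.

```python
import queue
import threading


def solver(steps, field_size, out_q):
    """Hypothetical toy solver: advances a field and hands off snapshots."""
    field = [0.0] * field_size
    for step in range(steps):
        # Stand-in for a real time step: each value moves halfway toward the step index.
        field = [0.5 * (v + step) for v in field]
        # Hand off a copy so the analyzer never shares the solver's working array.
        out_q.put((step, list(field)))
    out_q.put(None)  # Sentinel: no more snapshots are coming.


def analyzer(in_q, results):
    """Hypothetical in situ analysis: reduces each snapshot to summary statistics."""
    while True:
        item = in_q.get()
        if item is None:
            break
        step, snapshot = item
        results.append((step, sum(snapshot) / len(snapshot), max(snapshot)))


def run_in_situ(steps=5, field_size=4):
    # A small bounded queue keeps only a few snapshots in memory at once,
    # instead of writing every step to disk for later post-processing.
    q = queue.Queue(maxsize=2)
    results = []
    t_solve = threading.Thread(target=solver, args=(steps, field_size, q))
    t_analyze = threading.Thread(target=analyzer, args=(q, results))
    t_solve.start()
    t_analyze.start()
    t_solve.join()
    t_analyze.join()
    return results


if __name__ == "__main__":
    for step, mean, peak in run_in_situ():
        print(f"step {step}: mean={mean:.4f} max={peak:.4f}")
```

In standard CPython builds the interpreter lock means these threads illustrate the data flow rather than a real speedup; on hardware like Theta's, the analogous split dedicates separate hardware threads to solving and to analysis.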

Theta's Intel Xeon Phi processors have 64 CPU cores supporting up to 4 threads each; some of Theta's threads run the simulations while others perform in situ analysis and visualization. No GPUs are involved, which creates a more homogeneous computing environment than typical analysis and visualization systems. Compared with the previous environment, the move to Theta has resulted in improved vectorization and a larger fraction of the CPU being used efficiently.

PHASTA is an example of a typical code used in the Theta ESP program, which brings together representatives from a number of institutions to solve cutting-edge problems in computational science on next-generation supercomputers. PHASTA has been coded for flat MPI and for MPI+X, where X is either part-based threads or more fine-grained task-based threads. The latter has been shown to scale to large numbers of compute nodes at better than 75% efficiency on a variety of architectures. The contributors to the project will engage early with Theta, and eventually Aurora, to make sure that their code runs extremely well on the emerging hardware.
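The article does not say whether the 75% figure refers to strong scaling (fixed total problem size) or weak scaling (problem size growing with node count). For reference, the standard definitions, with $T(p)$ the run time on $p$ nodes and $p_0$ a baseline node count, are shown below, followed by a purely hypothetical worked example (the node counts and speedup are illustrative, not PHASTA results):

$$
E_{\text{strong}}(p) = \frac{p_0\,T(p_0)}{p\,T(p)}, \qquad
E_{\text{weak}}(p) = \frac{T(p_0)}{T(p)}
$$

$$
p_0 = 1000,\quad p = 16000,\quad \frac{T(p_0)}{T(p)} = 12
\;\Rightarrow\;
E_{\text{strong}} = \frac{1000 \times 12}{16000} = 0.75
$$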

The project works directly with a variety of parties who can benefit from its findings. For example, Jansen is collaborating with Boeing on the design of aircraft tail structures. Two Boeing employees are co-PIs on Jansen's project in the Aurora Early Science Program now underway at ALCF, which helps ensure that the research addresses problems of interest to Boeing and to the HPC user community in general. The fluid science emerging from these simulations on Theta's advanced architecture is drawing interest from across the fluid dynamics community, including CFD researchers, experimentalists, academics, and companies such as Boeing.

By learning more about the fundamental physics these simulations reveal, the researchers expect to push cutting-edge fluid mechanics into uncharted territory, such as simulations with full engineering realism.

This article originally appeared in R&D Magazine.